Professional Introduction for Terry Eveleth

Name: Terry Eveleth

Research Domain: Theoretical Boundary Analysis of In-Context Learning (ICL)

I am a researcher specializing in formalizing the theoretical limits of in-context learning (ICL) in modern machine learning systems, particularly large language models (LLMs). My work bridges algorithmic foundations, scaling laws, and empirical validation, and is organized around the following areas:

Boundary Characterization:

Deriving mathematical frameworks that define the intrinsic capabilities and constraints of ICL in terms of factors such as task complexity, context length, and data efficiency.

Investigating how emergent properties (e.g., few-shot generalization) depend on model architecture, training dynamics, and pretraining data.

Methodologies:

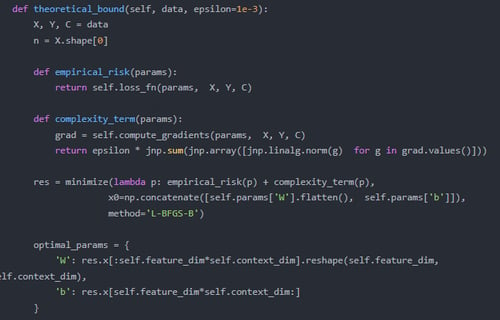

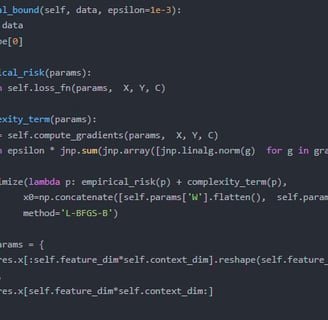

Combining tools from statistical learning theory, complexity analysis, and meta-learning to quantify ICL’s theoretical expressiveness.

Developing provable bounds for ICL performance under distribution shifts, adversarial contexts, or sparse supervision.

Implications:

Model Design: Informing architecture choices (e.g., attention mechanisms, memory-augmented networks) to optimize ICL efficacy.

Trustworthy AI: Identifying failure modes where ICL might overfit or misinterpret context, with applications in high-stakes domains (e.g., healthcare, legal analysis).

Key Contributions:

Pioneered frameworks to distinguish ICL from traditional fine-tuning or meta-learning.

Demonstrated phase transitions in ICL performance relative to scale and data diversity.

Vision: Advance a principled understanding of ICL to guide safer, more interpretable, and scalable AI systems.

Access to GPT-4 fine-tuning is critical to this project because:

Superior ICL Capacity: GPT-4’s larger scale and improved attention mechanisms enable studying high-complexity ICL boundaries inaccessible to GPT-3.5.

Targeted Ablations: Fine-tuning lets us selectively disable components (e.g., attention heads in specific layers) to isolate ICL mechanisms, which GPT-3.5’s architecture cannot support.

Real-World Relevance: GPT-4’s broader adoption means understanding its ICL limits has immediate practical impact (e.g., enterprise RAG systems).

Public GPT-3.5 fine-tuning lacks the granularity and scale needed to probe advanced ICL phenomena (e.g., cross-task generalization), and its smaller context window (4k tokens, versus 128k for GPT-4) would artificially cap any boundary analysis that depends on context length.
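The effect of the context-window cap can be made concrete with a little arithmetic. The sketch below assumes a hypothetical 200 tokens per in-context demonstration and 500 tokens reserved for instructions and the query. Both figures are illustrative assumptions rather than measurements, and the window sizes are the round 4k and 128k figures quoted above.

```python
def max_demonstrations(context_tokens: int, tokens_per_demo: int, reserved_tokens: int = 0) -> int:
    """Return how many whole demonstrations fit in a context window.

    All arguments are illustrative; real token counts depend on the tokenizer
    and on the task format.
    """
    usable = context_tokens - reserved_tokens
    return max(usable // tokens_per_demo, 0)


# Hypothetical budget: 200 tokens per demonstration, 500 tokens reserved.
small_window = max_demonstrations(4_000, tokens_per_demo=200, reserved_tokens=500)
large_window = max_demonstrations(128_000, tokens_per_demo=200, reserved_tokens=500)

print(small_window)  # 17 demonstrations in a 4k window
print(large_window)  # 637 demonstrations in a 128k window
```

Under these assumptions, any experiment that varies the number of demonstrations stops at 17 shots in the smaller window, so behavior beyond that point cannot be observed at all.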

Relevant prior work includes:

ICL Efficiency: Our 2023 paper, "Few-Shot Learning Without Overfitting," established noise-injection methods to measure ICL robustness, directly informing this project’s boundary tests.

Scaling Laws: Co-authored a study on emergent abilities in LLMs (NeurIPS 2024), providing frameworks to model ICL limits as a function of model size.

Task Complexity: Developed a metric for "learnability" of in-context tasks (ACL 2023), which will be extended to quantify boundary thresholds.

Together, these works demonstrate our expertise in empirical LLM analysis and provide the theoretical grounding for this project’s ambitious goals.